Your Data. Your Models. Your Datacenter.

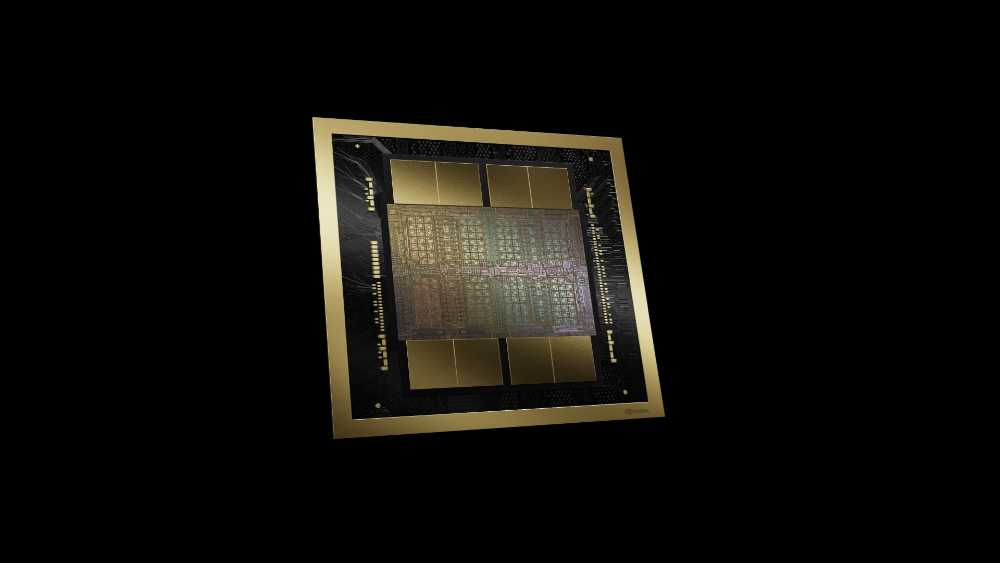

Palantir Technologies and NVIDIA have published a Sovereign AI OS Reference Architecture (AIOS-RA), a production-ready blueprint that takes organisations from GPU hardware procurement to running live AI applications while giving them total control over their data, AI models, and applications. AIOS-RA runs Palantir’s full software suite on NVIDIA’s Blackwell Ultra infrastructure across on-premises, edge, and sovereign cloud deployments.

What Is Inside AIOS-RA?

Four Types of Customers AIOS-RA Targets

The architecture is described as particularly critical for organisations fitting one or more of these profiles.

What the Customer Controls

The official announcement confirms enterprises retain total control over their data, AI models, and applications.

In Their Own Words

Directly from the official press release.