OpenAI has announced new voice and image capabilities in ChatGPT, a notable step forward for the chatbot's interface. These enhancements let users hold voice conversations with ChatGPT and show it images as visual references during a discussion. The features will roll out first to Plus and Enterprise users, with broader access planned in the near future.

Users can now snap pictures of landmarks or their surroundings and have quick conversations about them, expanding ChatGPT's role in everyday life. Voice interaction enables back-and-forth spoken conversations with ChatGPT, making it a versatile companion for a wide range of tasks. To activate voice features, users can go to Settings → New Features in the mobile app and opt into voice conversations.

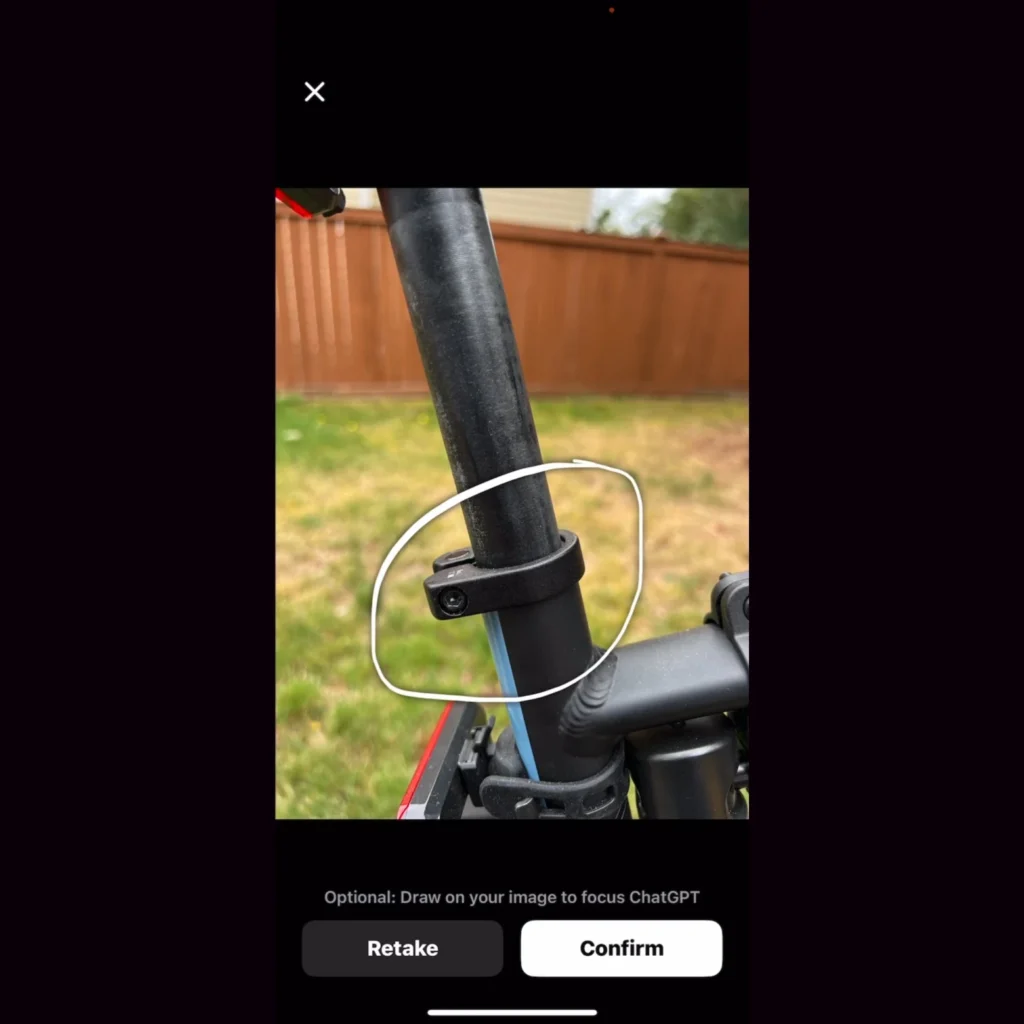

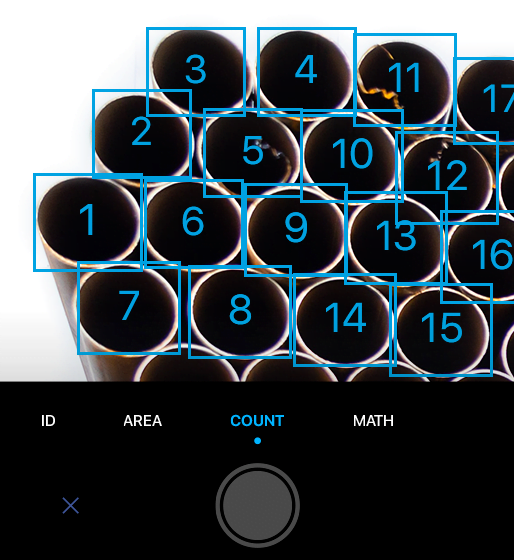

Image interaction allows users to show ChatGPT one or more images to help with tasks such as troubleshooting, meal planning, or analyzing complex graphs. The drawing tool in the mobile app can be used to highlight specific parts of an image and guide the assistant's attention. Image understanding is powered by multimodal GPT-3.5 and GPT-4, which apply their language reasoning skills to a wide range of images.

These new features could put ChatGPT ahead of competitors that remain focused on text prompts and outputs. The range of possible applications is broad. Many apps already use phone cameras or image-based inputs to offer services such as extracting text from images, performing quick calculations, or identifying species.

OpenAI is deploying these capabilities gradually, aiming for safe and beneficial applications while preparing for more powerful future systems. The new voice technology opens doors to creative and accessibility-focused applications, but it also presents risks such as impersonation and fraud. OpenAI has worked directly with professional voice actors to create the voices and with companies such as Spotify on specific applications of the technology, with the stated aim of using it responsibly.

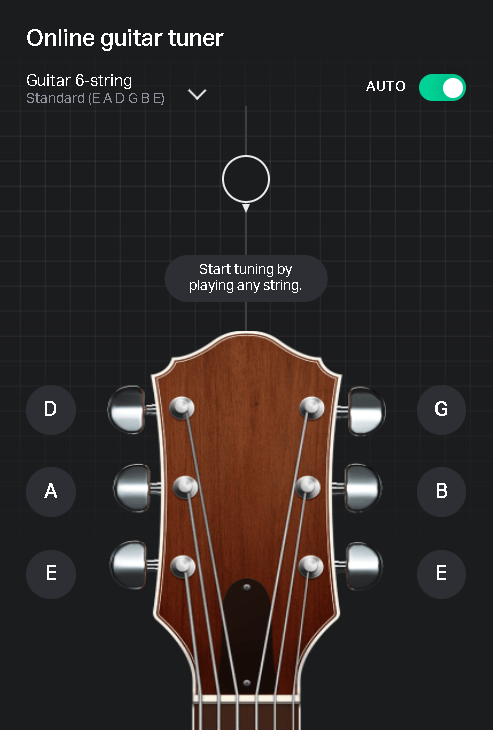

Despite these risks, voice input could save users a lot of typing and make conversations with ChatGPT faster and, in some cases, easier. Audio input could also support other uses, from tuning a guitar to learning a new language. Whether intentionally or not, OpenAI may now be competing with many app makers and software developers who have worked in this domain for years.

Vision-based models present challenges such as hallucinations and users relying on the model's interpretation of images in high-stakes domains. Before deployment, OpenAI says it conducted extensive testing and research to establish responsible usage and mitigate the risks associated with image inputs. The vision feature is designed to assist users in their daily lives by seeing what they see, an approach informed by OpenAI's collaboration with Be My Eyes, a mobile app for blind and low-vision people. OpenAI also says it has implemented technical measures to limit ChatGPT's ability to analyze and make direct statements about people, in order to respect their privacy.

Real-world usage and feedback are crucial for improving these safeguards while keeping the tool useful. OpenAI says it is transparent about the model's limitations, especially in transcribing non-English text, and advises against higher-risk uses without verification. The GPT-4V system card, released on September 25, 2023, provides a detailed analysis of the safety properties of GPT-4 with vision.

OpenAI is exploring new frontiers in artificial intelligence by adding image inputs to large language models, creating more versatile systems. According to OpenAI, the safety measures for GPT-4V build on those for GPT-4, with extra focus on handling image inputs. The company also says it actively manages risks through research and workshops on AI safety, and it has repeatedly stated that it investigates possible misuses of language models and ways to minimize the associated risks. OpenAI says it aims to keep innovating in AI while addressing these issues and ensuring responsible use.